Deepfakes: Manipulations threaten democracy

How can we find out whether information is real and trustworthy, especially information disseminated via the Internet or social media? The ability to manipulate videos or photos with the help of artificial intelligence (AI) makes it increasingly difficult to find clear answers. On behalf of the European Parliament, researchers at Karlsruhe Institute of Technology (KIT) have now studied the potential risks of deepfake technology and developed options for better regulation. Together with partners from the Netherlands, the Czech Republic, and Germany, they officially presented their results to members of the European Parliament.

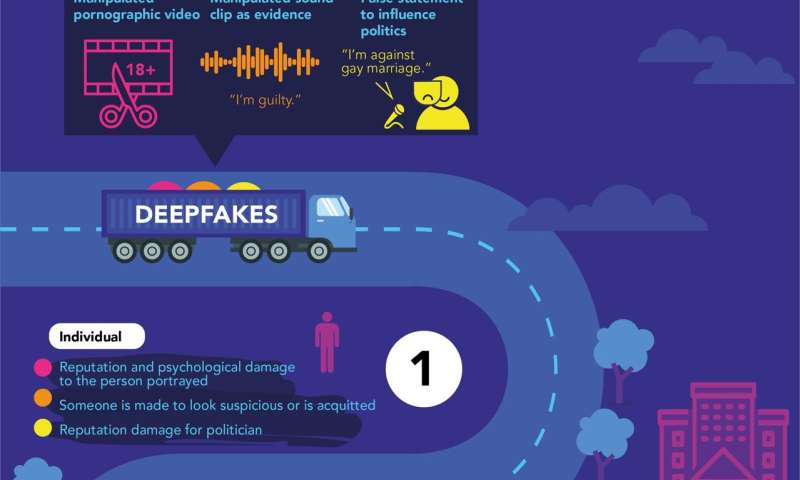

Deepfakes are photos, audio recordings, or videos that appear realistic, but in which AI technologies are used to place persons in new contexts or to put words into their mouths that they never said. "We are facing a new generation of digitally manipulated media contents that can be produced in a very cheap and easy way and look deceptively genuine," says Dr. Jutta Jahnel, who studies the social dimension of learning systems at KIT's Institute for Technology Assessment and Systems Analysis (ITAS). The technology opens up new opportunities for artists, for digital visualization at schools or museums, and for medical research, she acknowledges.

However, deepfakes are associated with major risks. This is evident from the international study presented to the European Parliament's Panel for the Future of Science and Technology (STOA). "The technology can be misused to very effectively disseminate fake news and disinformation," says Jahnel, who coordinated ITAS's contribution to the study. Faked audio documents can be used to influence or discredit legal proceedings and eventually threaten the judicial system. A faked video might be used not only to harm a politician personally, but also to influence the chances of her or his party in elections, thus undermining trust in democratic institutions in general.

Critical use of media content is necessary

The researchers from Germany, the Netherlands, and the Czech Republic propose concrete solutions. Because technology is progressing rapidly, regulation should not be limited to technology development. "To manipulate public opinion, fakes do not only have to be produced, they have to be disseminated," Jahnel explains. "Regulations on the use of deepfakes therefore have to start with internet platforms and media companies." But this will hardly eliminate AI-supported technologies for deepfakes. On the contrary, the researchers are convinced that individuals and societies will be confronted with visual disinformation more often in the future. In their opinion, it will be essential to take a more critical view of such content and to develop the skills to carefully check its credibility. The study was conducted by ITAS and the Fraunhofer Institute for Systems and Innovation Research in Germany and the Technology Centre CAS in the Czech Republic. It was coordinated by the Rathenau Institute in the Netherlands.

Another KIT pilot study on society's responses to deepfakes

Building on the European study, an interdisciplinary project at KIT is now focusing on what effective societal responses to deepfakes might look like. In this project, technology assessment experts cooperate with KIT experts in computer science, communication and legal sciences, and qualitative social research. The work aims to pool the findings and approaches of the different disciplines and to study the perspective of users in detail.

The complete report, "Tackling Deepfakes in European Policy," is available online.

Provided by Karlsruhe Institute of Technology